How to build an AI agent with LLM fine-tuning in Jitterbit Harmony

Introduction

This guide shows you how to build an AI agent with a fine-tuned Large Language Model (LLM) in Jitterbit Harmony using Studio. The guide uses OpenAI as the example LLM provider, but you can adapt these steps for other providers such as Google Gemini or AWS Bedrock. The core concepts remain the same, but the configuration steps may differ.

Tip

For learning purposes, reference the OpenAI Fine-Tuned Agent provided through Jitterbit Marketplace for an implementation of this guide.

Build an AI agent with LLM fine-tuning

- Create a new Studio project:

  - Log in to the Harmony portal and select Studio > Projects.

  - Click New Project. A Create New Project dialog opens.

  - In the dialog, enter a Project name such as AI Agent - OpenAI Fine-tuning, select an existing environment, and click Start Designing. The project designer opens.

-

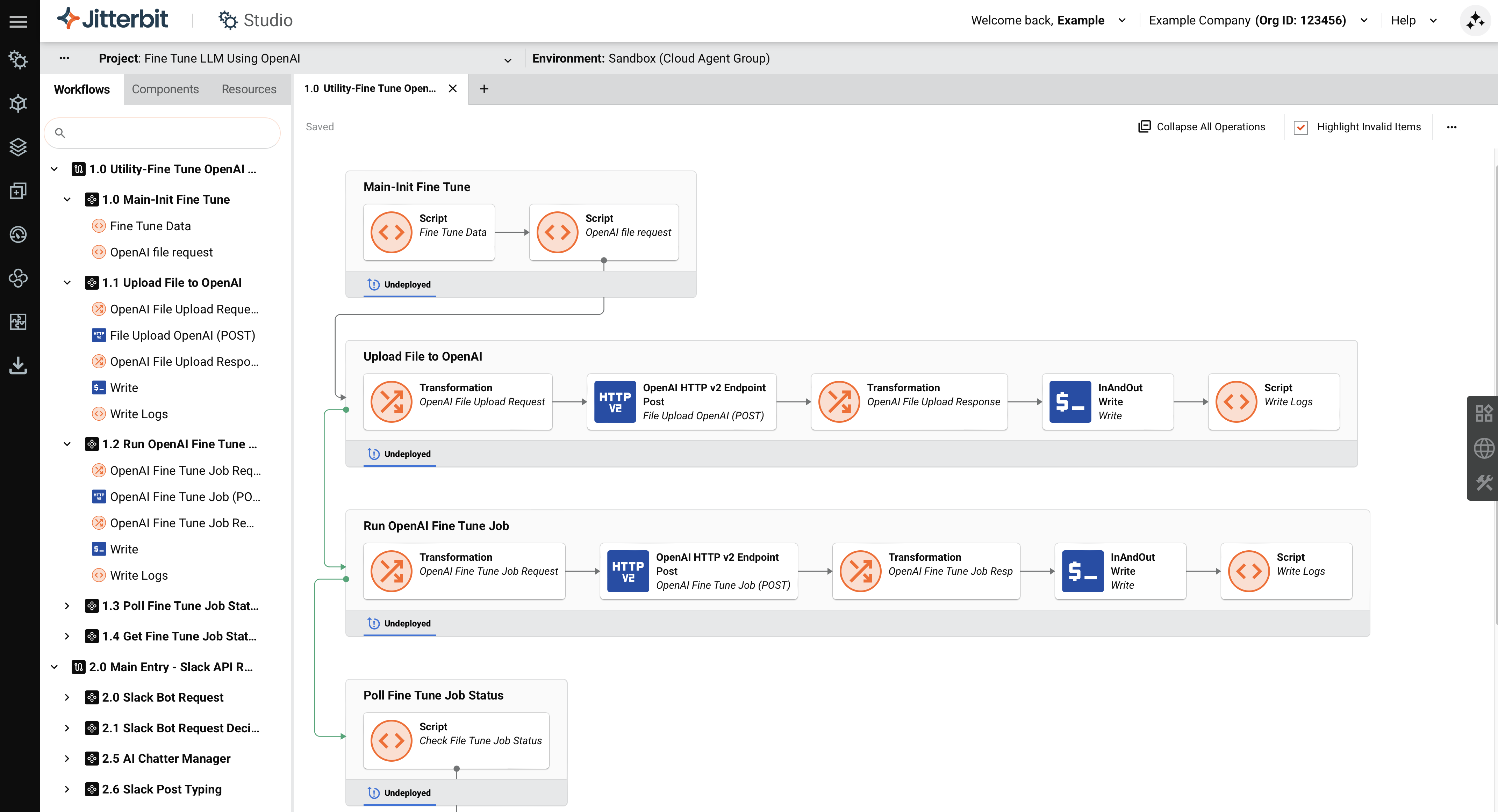

Create a fine-tuning utility workflow to train the LLM with your organization's data:

-

In the project designer, double-click the default workflow name and enter a name, such as

Utility - Fine Tune OpenAI Model. -

Use the HTTP v2 connector to create an endpoint for your LLM provider. This example uses OpenAI, but you can configure the HTTP v2 connector for any provider's API. In the Project endpoints and connectors tab, click the OpenAI endpoint to show its activity types.

  - Create an operation to upload training data and start the fine-tuning job. You need to prepare your training data according to your LLM provider's requirements. This example uses JSONL (JSON Lines) format, where each line represents a single training example. OpenAI requires at least 10 examples but recommends at least 50 examples for better results. Consult your provider's documentation for specific format requirements and minimum training data recommendations.

    The following example shows the JSONL schema format.

        {"messages": [{"role": "user", "content": "What was the top employee-requested feature in the 2025 internal IT satisfaction survey?"}, {"role": "assistant", "content": "The most requested feature in the 2025 IT satisfaction survey was single sign-on integration for all internal tools."}]}
        {"messages": [{"role": "user", "content": "In the Q3 Workplace Environment Survey, what did employees rate as the lowest performing area?"}, {"role": "assistant", "content": "The lowest performing area in the Q3 Workplace Environment Survey was the availability of quiet workspaces for focused tasks."}]}

  - Create a script to assign your training data to a variable, such as $InAndOut. This variable stores your JSONL training data and is used in the subsequent operations to upload the file to OpenAI and initiate the fine-tuning job.

  - Create an operation to monitor the fine-tuning job status. After the job completes, retrieve the fine-tuned model ID from the OpenAI fine-tune dashboard. You use this model ID when you configure the AI logic workflow to send queries to your fine-tuned model.
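Before uploading, it can help to check the training data against the JSONL requirements described in this step. The following is a minimal Python sketch that runs outside Jitterbit Studio. The function name `validate_jsonl` and the specific checks are assumptions for illustration, not part of the Marketplace agent, and your provider may enforce additional rules.

```python
import json

MIN_EXAMPLES = 10  # minimum example count this guide cites for OpenAI


def validate_jsonl(text: str) -> list[str]:
    """Return a list of problems found in JSONL training data (empty if none)."""
    problems = []
    # Each non-blank line is expected to hold exactly one training example.
    lines = [ln for ln in text.splitlines() if ln.strip()]
    for number, line in enumerate(lines, start=1):
        try:
            record = json.loads(line)
        except json.JSONDecodeError as exc:
            problems.append(f"line {number}: not valid JSON ({exc.msg})")
            continue
        messages = record.get("messages") if isinstance(record, dict) else None
        if not isinstance(messages, list) or not messages:
            problems.append(f"line {number}: missing non-empty 'messages' list")
            continue
        roles = [m.get("role") for m in messages if isinstance(m, dict)]
        if "user" not in roles or "assistant" not in roles:
            problems.append(f"line {number}: needs a user and an assistant message")
    if len(lines) < MIN_EXAMPLES:
        problems.append(f"only {len(lines)} examples; at least {MIN_EXAMPLES} required")
    return problems
```

An empty result means the data passed these basic checks. It does not guarantee that the provider accepts the file.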

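For orientation, the job-creation and monitoring operations in this workflow correspond to calls against the OpenAI REST API: a file upload to `/v1/files` with the purpose `fine-tune`, a `POST` to `/v1/fine_tuning/jobs`, and repeated `GET` requests on the job until it finishes. The Python sketch below shows only the request body and the polling logic. The helper names, the polling interval, and the injected `fetch_job` callable are assumptions for illustration. The guide retrieves the model ID from the dashboard; reading `fine_tuned_model` from the job response is an alternative assumed here.

```python
import time
from typing import Callable

OPENAI_BASE = "https://api.openai.com/v1"
# Job statuses after which polling should stop.
TERMINAL = {"succeeded", "failed", "cancelled"}


def job_request_body(training_file_id: str, base_model: str) -> dict:
    """JSON body for POST {OPENAI_BASE}/fine_tuning/jobs."""
    return {"training_file": training_file_id, "model": base_model}


def wait_for_model(
    fetch_job: Callable[[], dict],
    interval_s: float = 30.0,
    max_polls: int = 120,
) -> str:
    """Poll until the job reaches a terminal status; return the fine-tuned model ID."""
    for _ in range(max_polls):
        # fetch_job is a hypothetical callable that performs
        # GET {OPENAI_BASE}/fine_tuning/jobs/{job_id} and returns the parsed JSON.
        job = fetch_job()
        status = job.get("status")
        if status == "succeeded":
            return job["fine_tuned_model"]
        if status in TERMINAL:
            raise RuntimeError(f"fine-tuning job ended with status {status!r}")
        time.sleep(interval_s)
    raise TimeoutError("fine-tuning job did not finish within the polling budget")
```

Passing the HTTP call in as a callable keeps the polling logic independent of any particular HTTP client, which mirrors how the monitoring operation in Studio wraps a single HTTP v2 activity.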

- Create an API request handler workflow to receive user queries from your chat interface:

  - Click Add New Workflow to create a new workflow.

  - Rename the workflow to API Request Handler.

  - In the Project endpoints and connectors tab, drag the Request activity type to the drop zone on the design canvas.

  - Double-click the API Request activity and define a JSON schema appropriate for your chat interface.

  - Add a transformation to map data fields from the request to the format your AI agent requires. Refer to the OpenAI Fine-Tuned Agent for examples.

  - Create a Jitterbit custom API to expose the operation:

    - Click the operation's actions menu and select Publish as an API.

    - Configure the following settings: Method: POST and Response Type: System Variable.

    - Save the API service URL for configuring your chat interface. Your chat interface sends the request payload with the user's question to this service URL.
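To illustrate what a chat interface sends to the service URL, here is a minimal Python sketch that builds the `POST` request. The payload field `question` and the example URL are hypothetical: use the JSON schema you defined for the API Request activity and the service URL you saved. Any authentication your custom API requires is not shown.

```python
import json
import urllib.request


def build_chat_request(service_url: str, question: str) -> urllib.request.Request:
    """Build (but do not send) a POST request carrying the user's question."""
    # "question" is a hypothetical field name; match your own request schema.
    payload = json.dumps({"question": question}).encode("utf-8")
    return urllib.request.Request(
        service_url,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

To send the request, pass the returned object to `urllib.request.urlopen` and read the response body.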

- Create the AI logic workflow to handle user queries and return responses from the fine-tuned model. The following steps demonstrate the OpenAI implementation:

  - Click Add New Workflow to create a new workflow.

  - Rename the workflow to Main - AI Agent Tools Logic.

  - In the Project endpoints and connectors tab, drag an HTTP v2 POST activity from the HTTP v2 endpoint to the drop zone.

  - Add a transformation before the HTTP v2 POST activity. In this transformation, specify your fine-tuned model ID to direct queries to your trained model. The query sent to the LLM is the user's question from the payload received in the API Request Handler workflow. Refer to the OpenAI Fine-Tuned Agent for examples.

  - Add a transformation after the HTTP v2 POST activity to map the OpenAI response into a structured output format for your chat interface. Refer to the OpenAI Fine-Tuned Agent for examples.
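The two transformations around the HTTP v2 POST activity can be pictured as a pair of mapping functions. The Python sketch below assumes the activity targets the OpenAI Chat Completions endpoint, which accepts a `model` and a `messages` list and returns the reply under `choices[0].message.content`. The function names and the output fields `answer` and `ok` are hypothetical. The actual mappings in the Marketplace agent may differ.

```python
def build_completion_body(model_id: str, question: str) -> dict:
    """Request body for the LLM call: the fine-tuned model ID plus the user's question."""
    return {
        "model": model_id,
        "messages": [{"role": "user", "content": question}],
    }


def to_chat_reply(openai_response: dict) -> dict:
    """Map a Chat Completions response to a simple structure for the chat interface."""
    choices = openai_response.get("choices") or []
    if not choices:
        # No completion returned; signal failure instead of raising.
        return {"answer": "", "ok": False}
    content = choices[0].get("message", {}).get("content") or ""
    return {"answer": content, "ok": True}
```

Keeping the response mapping tolerant of a missing `choices` list lets the chat interface show a fallback message rather than fail on an unexpected payload.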

- Connect the workflows:

  - Return to the API Request Handler workflow.

  - Call the operation in the Main - AI Agent Tools Logic workflow using one of these methods:

    - Add a script that uses the RunOperation function.

    - Configure an Invoke Operation (Beta) activity.

- Configure your chat interface. This AI agent can work with various platforms including Slack, Microsoft Teams, microservices, SaaS apps like Salesforce, or applications built using Jitterbit App Builder. Configure your chosen platform and set the request URL to your Jitterbit custom API service URL.

Tip

For a Slack implementation example, see the OpenAI Fine-Tuned Agent in Jitterbit Marketplace.

- Click the project's actions menu and select Deploy Project.